We Should Not Have to Prove We're Human to Sam Altman

Worldcoin, Sam Altman, privacy, and why I hate being right sometimes.

Yesterday, I saw this in my feeds and almost fell off my chair:

For context, here’s the BBC’s take (emphasis mine):

Tinder will let users prove they are human and not robots by bringing advanced eye-scanning technology to the app amid rising fears over AI.

Users of the dating app, as well as other major platforms such as video calling service Zoom, will be able to scan their irises to earn a “proof of humanity” badge attached to their profile or name.

Through either an online app or an orb-shaped scanning device run by the World network people can submit to a scan of their iris, the coloured portion of the eye, in order to confirm they are human.

World, formerly known as Worldcoin, is part of Tools for Humanity, a start-up co-founded and chaired by Sam Altman, who is also the head of ChatGPT-maker OpenAI.

Once a person is confirmed as human by the technology they receive a unique identification code, which is stored on their smartphone and considered their World ID.

As Sam Illingworth over at Slow AI observed, Sam Altman helped create an AI-shaped problem, and he is now trying to peddle his World eyeball-scanning-orb-cum-crypto-token as the solution.

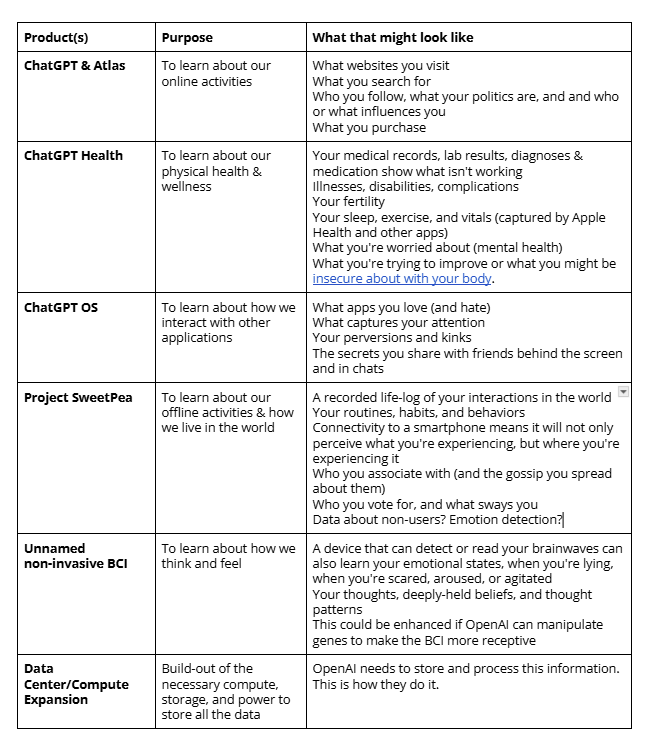

This is precisely the concern I explored in The Helpful Ladder section in Part 2:

In fact, in early drafts of this part of the article, I had included World as a step on the ladder of how OpenAI would connect its various tools to obtain a complete picture of you. The thing is, I was never able to explicitly connect World to OpenAI’s more overt data grabs. Aside from the fact that Alex Blania is a co-founder of BCI company Merge Labs (which received seed funding from OpenAI in January) and co-founder (with Altman) of the Worldcoin Foundation (the data controller) and Tools For Humanity (owners of the actual Orbs), I hadn’t found any actual evidence.

Ever worried about sounding like an absolute crank, I kept it out, and I really regret that I doubted myself.

I like being right, but not like this

As Husbot knows, I love being right about things, but I hate being right about this, because it’s an awful thing to be right about. This is why I call myself Privacy Cassandra — I can see that we’re headed in a dark, rights- and agency-eroding direction, but nobody ever believes me.

We shouldn’t need to have our irises scanned by a company that has been under investigation or shut down by regulators in at least six countries. We shouldn’t need to prove we’re human[1] to go on dates, buy concert tickets, purchase goods, sign a document, attend a Zoom call, or vote. We certainly shouldn’t need to trade our biometric data to Sam Altman for a bullshit token that’s worth fractions of a cent and will only, at best, ever meaningfully enrich the billionaires who own 23% of the token stake.[2]

I’m angry this is a thing at all.

I’m frustrated that members of the press aren’t reporting on this critically enough, beyond alluding to “privacy concerns”. Did anyone ask whether World has addressed any of the “privacy concerns” highlighted by regulators over the last two years, including the Bavarian Data Protection Authority (BayLDA), the Hong Kong Office of the Privacy Commissioner, or most recently the Philippines National Privacy Commission? Did anyone bother to mention that World has been suspended, forced to shut down entirely, or required to delete data collected in Brazil, Germany, Kenya, Hong Kong, the Philippines, and Thailand?

Since it’s my blog, I’ll lay out the questions that have, to my knowledge, still been left unanswered:

Does World still believe that iris codes, or the encrypted biometric shards currently used to store this data, are not personal data under data protection laws, or special category biometric data under Article 9 GDPR?[3]

Does World still believe it can bypass informed user consent requirements by relying on a legitimate interests lawful basis in the EU?[4]

Can data subjects meaningfully exercise their rights to rectification, erasure, withdrawal of consent, or the right to opt out of processing?[5]

Similarly, can data subjects even offer informed, specific, unambiguous and freely given consent in the first place?[6]

Is World still storing encrypted biometric shards, historic iris codes, or other personal data? If so, can they ensure that their security controls are robust, and that they meet requirements under data protection laws?[7]

I have more questions that will probably remain unanswered. I’m angry about this. I’m also angry that Sam Altman is at least partially responsible for us needing to provide “proof of humanness” in the first place.

Either way, I look forward to the next round of regulatory review, which will almost certainly follow.

[1] Originally, in the Worldcoin Foundation days, the company referred to this as “proof of personhood” but appears to have moved from that phrase to “proof of human” sometime in 2025, likely because “proof of personhood” isn’t catchy enough.

[2] The Tools for Humanity Investors and Initial Development Team own a collective 23.8% stake in World (WLD) tokens. See: https://tokenomist.ai/worldcoin-wld

[3] If this sounds crazy, know that this is a position the Worldcoin Foundation asserted in 2024 to the BayLDA: “Furthermore, the Worldcoin Foundation argued that the iris codes are not personal data, as they are not linked to the World-ID, the name or other identifiers.” (Sec. 58, BayLDA opinion). They also argued that their secure multi-party compute (SMPC) framework meant that encrypted sharded iris data did not constitute personal data. There are lots of details in the opinion on this, in Sections 338-380 and elsewhere.

World has since adopted a new ‘Anonymized Multi-Party Compute (AMPC)’ approach, but I have not seen a regulatory review/DPIA that addresses whether AMPCs are personal data or not. They claim that data is anonymized and secure, but also have a disclaimer at the bottom: “The above content speaks only as of the date indicated. Further, it is subject to risks, uncertainties and assumptions, and so may be incorrect and may change without notice.”

[4] “Moreover, the Worldcoin Foundation stated that Article 6(1)(1)(f) GDPR serves as the legal basis for the processing of the iris codes. The Worldcoin project is a voluntary offer.” (Sec. 60, BayLDA opinion)

[5] See Section 279.

[6] The meaningful consent question was a theme brought up by many regulators, particularly in developing countries. The incentive structure encouraged individuals to trade biometric data for World Tokens.

Both the Brazilian Data Protection Authority (ANPD) and the Kenyan High Court concluded in 2025 that the consent obtained by Orb operators from individuals was tantamount to ‘induced consent’:

Worldcoin’s consent was induced through offering approximately KES. 7,000/= to data subjects who could not withdraw their consent without losing the Worldcoin. The High Court held that the consent was not free, specific and informed as per section 2 of the DPA. It emphasized that consent should be informed and free from coercion as per the Data Protection (General) Regulations.

Language and transparency deficiencies were also routinely an issue. For example, here’s the Hong Kong Data Protection Commissioner’s opinion:

In particular, the relevant “Privacy Notice” and “Biometric Data Consent Form” were not available in Chinese, the iris scanning device operators at the operating locations also did not offer any explanation or confirmed the participants’ understanding of the aforesaid documents. They also did not inform the participants the possible risks pertaining to their disclosure of biometric data, nor answered their questions.

[7] As of January 30, 2026, the Thai authorities certainly don’t think they did.

See BayLDA Secs. 183-186, 410-439.

Thank you for being one of the few people I have seen to properly report on this issue. Also, there is nothing wrong with being the Privacy Cassandra; it's just a shame that the actions of others make it necessary. 😢

World isn't a separate venture that happens to solve a problem OpenAI created. Sam is building the next nest (he's done this before) inside the current one, funded and positioned by OpenAI.

OpenAI generates the bots. The bots create the verification crisis. World sells the verification. World's value depends on the crisis OpenAI maintains. The cofounder structure (Blania at Merge Labs with OpenAI seed funding, Altman at both) isn't coincidence...

Use the current platform's resources, reach, and crisis to build the next platform inside it, where the next platform monetizes the very thing the current platform broke.

Thank you for this article. It just connected a dot I've been looking for for a while.